Conversational Stats - a way to talk to your data

Leverage the power of Dialogflow & connect your data sources with simple Apps Script functions to experience a next-gen approach to consuming data and shaping insights.

i participated in this year's first hackathon (hosted at work), where i teamed up with arjun mahishi to hack on "statistix", the name we gave our project, which let you talk to your stats.

context

here's some of my rationale for building something that might not have made perfect sense to everyone at first:

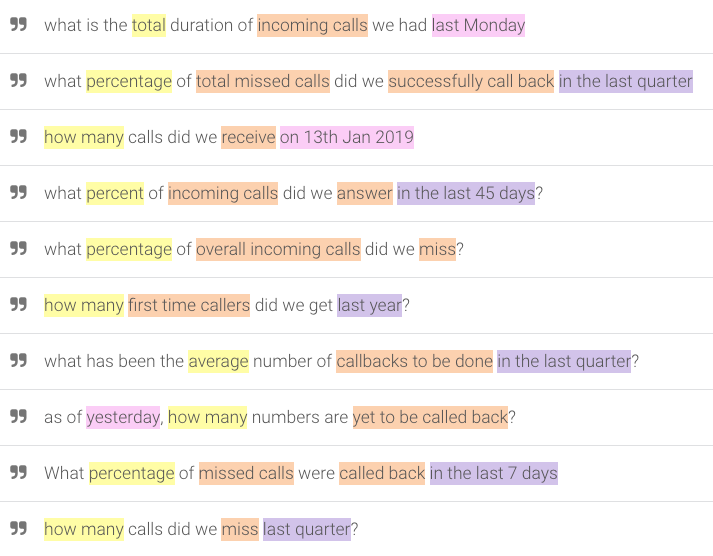

- a solution where you can talk to your data instead of building charts or plotting pivots/graphs makes it easier to answer questions like these…

- independence from predefined calculations

- in my experience, you sometimes walk into a meeting armed with all the calculations and visualisations you think are relevant to the discussion, and still end up facing questions that make you rethink or rework what's being presented. now imagine if the stakeholders had instant, direct access to answers for newer, more complex questions all by themselves: one might never have to "prepare" the data again

- better speed and improved efficiency

- i think this bit speaks for itself: while we as humans might make erroneous calculations somewhere along the road, a machine is highly unlikely to return inaccurate answers from an existing dataset. plus, with the ability to converse with the data, one could also do away with spending hours wrangling it to fit the needs of the situation

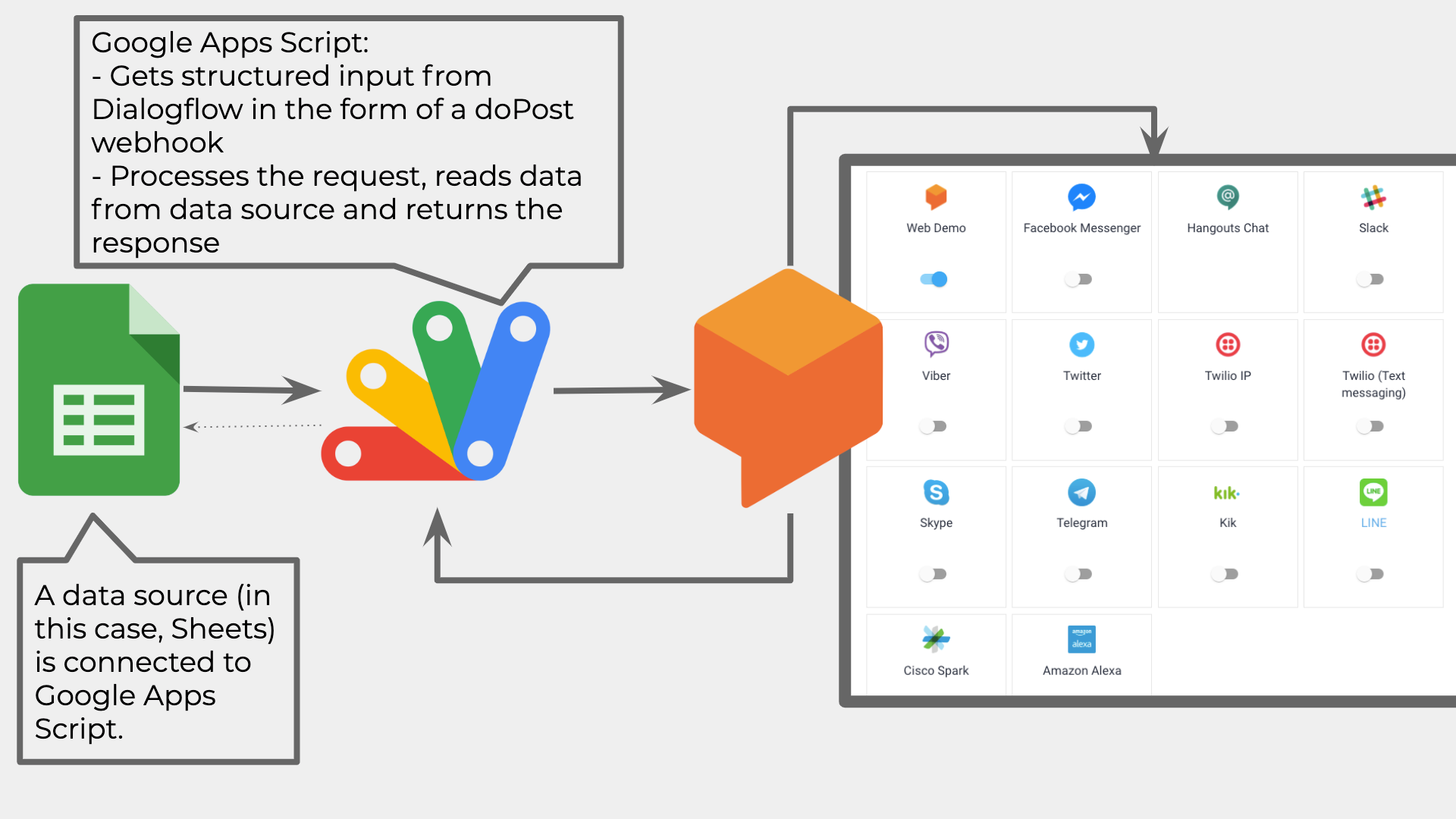

architecture

what would truly have been great is if we could have put the "calculations" part on an automated, ai/ml-driven path, but that's not what we ended up achieving. instead, with the help of dialogflow, we were able to capture conversational input in a more structured form (intents). each matched intent was then sent as an incoming webhook to a doPost handler in google apps script, which read the data from a spreadsheet and returned the output as a response.
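a rough sketch of that flow (intent → webhook → spreadsheet lookup → response) is below. the project itself used a doPost function in google apps script, which is javascript; this is only a python approximation of the same request/response shape, assuming dialogflow es's v2 webhook format (`queryResult.intent.displayName`, `queryResult.parameters`, and a `fulfillmentText` field in the reply). the intent name `incoming_calls`, the `date` parameter and the in-memory `ROWS` list standing in for the spreadsheet are all hypothetical.

```python
import json

# hypothetical stand-in for the spreadsheet: one row per day.
# in apps script this data would be read from a sheet instead.
ROWS = [
    {"date": "2020-01-24", "incoming_calls": 37},
    {"date": "2020-01-25", "incoming_calls": 42},
]


def count_incoming_calls(params):
    """sum the incoming_calls column for the requested day (hypothetical intent)."""
    # assumes the date parameter arrives as an ISO-8601 string; keep YYYY-MM-DD
    day = params.get("date", "")[:10]
    return sum(row["incoming_calls"] for row in ROWS if row["date"] == day)


# map intent display names to handler functions (names are hypothetical)
HANDLERS = {
    "incoming_calls": count_incoming_calls,
}


def handle_webhook(body: str) -> str:
    """play the role of doPost: parse the request, compute, reply as JSON."""
    request = json.loads(body)
    query = request["queryResult"]
    intent = query["intent"]["displayName"]
    params = query.get("parameters", {})

    handler = HANDLERS.get(intent)
    if handler is None:
        text = "sorry, i don't know how to answer that yet"
    else:
        # like the hackathon script, return the bare value as text
        text = str(handler(params))
    return json.dumps({"fulfillmentText": text})


sample = json.dumps({
    "queryResult": {
        "queryText": "how many total incoming calls did we receive yesterday?",
        "parameters": {"date": "2020-01-25T12:00:00+05:30"},
        "intent": {"displayName": "incoming_calls"},
    }
})
print(handle_webhook(sample))  # {"fulfillmentText": "42"}
```

keeping one handler per intent in a lookup table means adding a new kind of question is a matter of registering another intent in dialogflow and another function here.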

learnings

- it was paramount to add every form of input we could think of as training data within dialogflow's 'intents': even though it understood the context and tagged phrases with their respective entities right away, it crapped out during tests whenever a phrasing hadn't already been registered within its intent

- as much as we searched and hoped, the response from dialogflow was not automatically a "conversational" output.

- meaning: if we asked a question whose answer was just a number, we expected it to "speak" that output as a full sentence

- example question: "how many total incoming calls did we receive yesterday?"

- expected response: you received 42 calls as of 25th of january, 2020

- actual response: 42 (which is what the script was programmed to return)
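one way around this would have been to do the "speaking" in the script itself, wrapping the bare number in a sentence before returning it. below is a minimal python sketch of that idea, assuming the answer is a call count for a given day; the function names are hypothetical, and the month names are hard-coded so the output doesn't depend on the system locale. in a dialogflow es webhook, the resulting string would go into the `fulfillmentText` field of the reply.

```python
from datetime import date

# hard-coded so the output doesn't depend on the system locale
MONTHS = [
    "january", "february", "march", "april", "may", "june",
    "july", "august", "september", "october", "november", "december",
]


def ordinal(n: int) -> str:
    """1 -> 1st, 2 -> 2nd, 11 -> 11th, 22 -> 22nd, 25 -> 25th, ..."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def calls_sentence(count: int, day: date) -> str:
    """turn a bare count into a sentence (hypothetical helper)."""
    noun = "call" if count == 1 else "calls"
    month = MONTHS[day.month - 1]
    return f"you received {count} {noun} as of {ordinal(day.day)} of {month}, {day.year}"


def build_response(count: int, day: date) -> dict:
    """reply body for the webhook, with the sentence instead of the raw number."""
    return {"fulfillmentText": calls_sentence(count, day)}


print(calls_sentence(42, date(2020, 1, 25)))
# you received 42 calls as of 25th of january, 2020
```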

demo

presentation

you can view the entire slide deck here.